Empirical Gaussian Processes

Feb 1, 2026

Jihao Andreas Lin

Co-first author

Sebastian Ament

Co-first author

Louis Tiao

David Eriksson

Maximilian Balandat

Eytan Bakshy

Abstract

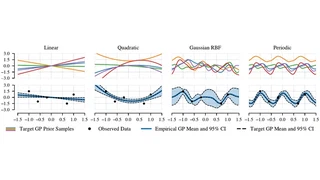

Gaussian processes (GPs) are powerful and widely used probabilistic regression models, but their effectiveness in practice is often limited by the choice of kernel function. This kernel function is typically handcrafted from a small set of standard functions, a process that requires expert knowledge, results in limited adaptivity to data, and imposes strong assumptions on the hypothesis space. We study Empirical GPs, a principled framework for constructing flexible, data-driven GP priors that overcome these limitations. Rather than relying on standard parametric kernels, we estimate the mean and covariance functions empirically from a corpus of historical observations, enabling the prior to reflect rich, non-trivial covariance structures present in the data. Theoretically, we show that the resulting model converges to the GP that is closest (in the KL-divergence sense) to the real data-generating process. Practically, we formulate the problem of learning the GP prior from independent datasets as likelihood estimation and derive an Expectation-Maximization algorithm with closed-form updates, allowing the model to handle heterogeneous observation locations across datasets. We demonstrate that Empirical GPs achieve competitive performance on learning curve extrapolation and time series forecasting benchmarks.
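To make the idea of an empirically estimated prior concrete, here is a minimal sketch of the simplest special case only: every historical dataset is observed on the same grid, so the maximum-likelihood Gaussian prior is just the sample mean and sample covariance. This is not the paper's EM algorithm, which handles heterogeneous observation locations. The function names (`fit_empirical_prior`, `condition`), the synthetic saturating curves, the jitter, and the noise variance are all illustrative assumptions.

```python
import numpy as np


def fit_empirical_prior(Y, jitter=1e-6):
    """Sample mean and covariance of curves Y with shape (num_datasets, num_points).

    Assumes all datasets share one grid (a simplification, not the paper's setting).
    """
    mean = Y.mean(axis=0)
    centered = Y - mean
    cov = centered.T @ centered / Y.shape[0]  # Gaussian maximum-likelihood estimate
    return mean, cov + jitter * np.eye(Y.shape[1])  # jitter for numerical stability


def condition(mean, cov, obs_idx, y_obs, noise_var=1e-4):
    """Standard Gaussian conditioning of the grid prior on noisy observations."""
    rest_idx = np.setdiff1d(np.arange(mean.shape[0]), obs_idx)
    K_oo = cov[np.ix_(obs_idx, obs_idx)] + noise_var * np.eye(len(obs_idx))
    K_ro = cov[np.ix_(rest_idx, obs_idx)]
    K_rr = cov[np.ix_(rest_idx, rest_idx)]
    post_mean = mean[rest_idx] + K_ro @ np.linalg.solve(K_oo, y_obs - mean[obs_idx])
    post_cov = K_rr - K_ro @ np.linalg.solve(K_oo, K_ro.T)
    return rest_idx, post_mean, post_cov


# Hypothetical corpus: 200 noisy saturating curves on a shared grid of 30 points.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 30)
a = rng.uniform(0.6, 1.0, size=(200, 1))
b = rng.uniform(2.0, 8.0, size=(200, 1))
corpus = a * (1.0 - np.exp(-b * t)) + 0.01 * rng.standard_normal((200, 30))

mean, cov = fit_empirical_prior(corpus)

# Extrapolate a new curve from its first 10 points using the learned prior.
new_curve = 0.8 * (1.0 - np.exp(-5.0 * t))
obs_idx = np.arange(10)
rest_idx, post_mean, post_cov = condition(mean, cov, obs_idx, new_curve[obs_idx])
```

Here `post_mean` and the diagonal of `post_cov` give the extrapolated values and their uncertainty at the unobserved grid points. The learned covariance is not tied to any parametric kernel family, which is the property the abstract emphasizes; extending this to datasets observed at different locations is where the likelihood-based EM formulation becomes necessary.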

Type

Publication

Proceedings of the 43rd International Conference on Machine Learning (ICML 2026)